The title of this article may seem somewhat prosaic, but given that it really is about birth after death it seems appropriate. For I really did die on July 25 2022, and that which came back to life was not the same person, and certainly not the same doctor.

Prior to 2020 I hadn’t asked the question: ‘what is a doctor?’ I entered medical school to escape working class powerlessness, and successfully developed unhealthy delusions of grandeur reveling in a body of knowledge that I now know to be about as substantial as clouds. I did have some moments of sober reflection during my undergraduate days, but they were not in Dublin. Rather, the people and doctors of Moscow taught me to see the world from a different perspective. I have no love of Soviet-style Communism, and no wish to eulogize it, given the millions of lives lost or destroyed, but the sense of classlessness I experienced in the Russia of 1990 was liberating. It was a feeling that soon evaporated on returning to the ‘land of the free.’

Reflecting now on how I practiced medicine, I think it was fortunate, above all for the patients who encountered me, that for much of that time I worked in low-risk environments. Despite my paucity of knowledge and practical skills I succeeded in doing some good by listening and trying to understand complex human relationships, and the societal forces shaping these. With that perceived limited skill set – perhaps created by impostor syndrome and the pressure of the short duration of time per consultation – one invariably becomes a conduit for the distribution of pharmaceutical products. The quick pattern recognition followed by the reflexive use of the prescription pad. I was getting well paid. I was doing the same as my colleagues, or at least that’s what we told each other in practice meetings, and all was right in the world.

Of course, I never really questioned what world I was actually referring to, my own or my patients’. On reflection I chose willful blindness over open scepticism, a strange position to take for a young man brought up in Ireland since the 1960s. This was a country that showed clearly – at least to anyone who chose to look – that those in power and positions of authority had feet of clay. That period revealed clerical abuse, government corruption and waste, medical malfeasance in the form of vaccine experiments and the selling of children to wealthy Americans in collusion with the Church. Then we had the banking and economic collapse leading to the selling off of the country and its sovereignty, and more recently the Covid-19 scandal. Why did I think that the biomedical model served anyone other than those corporations and professions earning vast profits from illness?

Awakening

A growing cynicism and scepticism coalesced into an awakening on St Patrick’s Day, March 17, 2020, when then Taoiseach (prime minister) Leo Varadkar paraphrased Winston Churchill’s World War II speech: ‘never in the field of human conflict was so much owed by so many to so few.’ It was then, to quote Emily Dickinson, that I felt “a cleaving in my mind”. The juxtaposition of such incongruent images as the much loved and revered patron saint of Ireland, with his herpetology skills, and the current barely re-elected and much reviled Taoiseach, conjuring up images of the London Blitz when speaking about an impending wave of betacoronavirus infections, recalled a Monty Python sketch.

The more I listened to mainstream media in Ireland, which mainly consisted of the state-funded Raidió Teilifís Éireann (RTÉ), the more the absurdities flowed and the cleft grew. Eventually, this dislocated me and a few like-minded colleagues from the rest of our colleagues’ apparent embrace of what to us seemed a clearly fabricated, dystopian reality. Doctors shut their practices and refused to see or treat patients because the Irish College of General Practitioners told them that there was no treatment available. Yet the HSE had been claiming that hydroxychloroquine was effective in treating SARS-CoV-1, from 2003, and sent a circular to pharmacists suggesting they stock up on the drug and reserve it for treating patients in hospital with SARS-CoV-2.

Who thought that this was ethically and morally appropriate? The rest of society followed suit, accepting with slack-jawed gormlessness curious phrases such as ‘apart together’, ‘social distancing’ and ‘flatten the curve’, along with the ultra-dystopian ‘build back better’ and the ‘new normal’. What did any of these inane statements even mean?

Societal strategies such as mandatory mask-wearing were inflicted with the emphatic certainty only fools can generate and even bigger fools gorge themselves on. Masks of any material, worn walking through restaurants, but not seated, even masks for solo journeys in cars. Then we had the perspex screens over which, apparently, viruses couldn’t jump, the safe purchasing practice of beer and crisps, but not socks and shoes, within the same department stores, and the viral-repellent Nine Euro Meal, along with the destructive removal of children from school for months.

The sacred was not spared the ravages of this banal evil. Burials were in closed caskets, while no wakes were allowed, and only a ‘safe’ few mourners were permitted; weddings were cancelled, and masses went uncelebrated.

The medical profession adopted its own dystopian practices such as artificially ventilating cases initially, at least until they realised they were actively killing people. Within general practice the main concern expressed on a well-known GP support website was the potential loss of income if we couldn’t see patients. Any attempt to discuss the ramifications of drastically altering the daily rhythms of society was met with ridicule, and dismissed as irrelevant. After all, this was a pandemic and we could lose a substantial amount of our income! Later, when the topic of vaccine adverse events was raised, many of the same people urged us to shut up and vaccinate.

Nursing Homes

Meanwhile, in the nursing homes around Ireland, the elderly were left alone, unloved, unvisited and untreated unless it was end of life care. How ironic and criminally sad that these people should be treated this way for ‘their own good’.

A personal story about a patient of mine may bring home the human tragedy. Jim and Mary were married for close to sixty years. Mary was moved to a nursing home after her dementia worsened to a point where she could no longer be cared for at home. Once that happened Jim visited her every day. Speaking to him after several of these visits he expressed his frustration at her memory loss. Then one day after a visit he came out and told me that he discovered that Mary had excellent recall of the events of their early life together, so he would just talk about those memories. For a while he had the woman he married back.

Then the nursing homes prevented people visiting on account of Covid. Neither the residents nor their families were asked for their permission to be separated. Jim still visited every day but he would come away frustrated. Mary would be placed in the window, like a mannequin, and Jim would stand outside. On a sunny day he would stand there looking at his own reflection, unable to see his wife.

Jim was finally allowed in to see Mary, but by then she was on her death bed and was unable to share any memories or even say goodbye. This was for the greater good of course.

What wasn’t used for anyone’s ‘good’ were treatments such as ivermectin and hydroxychloroquine, despite emerging evidence of efficacy from reputable clinicians around the world. Curiously these ‘reputable’ clinicians rapidly became disreputable, despite decades of blemish-free clinical service to their patients. Some had very respectable research and academic careers. Yet, they became outcasts, renegades, not to be trusted according to the ‘fact-checkers.’ This latter group of reprobates turned out to be captured academics with vested interests in protecting certain ideologies, or social media companies pressurised by the U.S. State Department and FBI to suppress all ‘thought crime’.

But One Hope

Fear was thus weaponised as the great and the good climbed aboard the gravy train and stoked fear until a mental paralysis gripped the nation. Any dissenting voice was dismissed as selfish and lacking a social conscience. We had but one hope: the vaccine, which was arriving at ‘warp speed,’ while Ursula von der Leyen was exhausting her texting thumb making sure that we in Europe would be saved.

Everybody would be rescued, whether they wanted it or not, and sure who wouldn’t want a novel pharmaceutical product that was still in phase 3 of clinical trials? These were trials that were confounded by giving the placebo arm the product, a product never before used successfully as a vaccine. This was a product for which the English language had to be subverted in order to accommodate it. Only the insane or the selfish would not want to be rescued, and we don’t want that type of people in our ‘new normal’ world: this was the message that came from politicians, celebrities and doctors via a complicit media. They pleaded with us, for all our sakes, to get vaccinated. People who at any other time would not give a moment’s reflection to inordinately long waiting times in our public hospitals, the overcrowding in our prisons, the record levels of homeless children, or the plight of the working class suddenly wanted to embrace collectivism, and ideas about humanity sharing the burden of this ‘pandemic.’ And it worked. Beaten down by fearmongering propaganda and the mind-numbing effects of Netflix, beer and pizza, most people walked towards the light, or rather what they were told was the light.

As of 2025 homelessness in Ireland is at a record high, along with immigration and the cost of living. Excess deaths, which remained steady until 2020 (2018: 31,116; 2019: 31,134; 2020: 31,765), rose to 33,055 in 2021, 35,477 in 2022, 35,459 in 2023 and 35,173 in 2024. Cancer is also on the rise. We have the second highest rate in Europe as of 2022 (our Minister for Health’s office informed me that this was because we are so much better at recording than other nations). International events have further revealed the powerlessness of many nations and that the rule of law isn’t universal. There is no rules-based order. There is only power and money and the golden rule is that those who have the gold rule!

Vaccine Injured

Amongst the flotsam and jetsam post-Covid are those injured by these vaccines, who remain inadequately accounted for. They are deemed to be invisible, even inconvenient, and regularly have their realities denied by the very people who created the problem. The medical profession is still clinging to the idea that it saved the world from the plague and is indignant that more gratitude hasn’t been shown.

The medical profession, according to JAMA (Journal of the American Medical Association), has seen a 30% drop in public trust. This will have complex reasons behind it, but the combination of snout in trough and downright dishonesty will have contributed. Gaslighting those who were previously well and now cannot function after receiving Covid vaccines has only added to this.

People will reflect on the misuse of the Covid vaccines, the profits made and the lies told about their efficacy and safety, and wonder how many times these same scenarios played out in a greater or lesser form in the past.

After thirty years of practice, I simply can no longer engage with a profession that has been captured by an industry whose sole aim is profit. Most postgraduate medical training is paid for or delivered by the pharmaceutical industry. One has to question what the priorities are of an industry that spends $19 on advertising and marketing for every dollar spent on research.

This results in a disease model rather than one that examines the root cause. The former produces conditions that coincidentally have pharmaceutical products as alleged solutions. This chronic disease approach rarely if ever returns a person to a state of health. With such an interventionist approach one can understand why around a quarter of a million people may die each year at the hands of the medical profession in the USA, and perhaps 5,000 per annum in Ireland. An emphasis on sleep, diet, breath and movement is unlikely to result in such carnage or in such vast profits.

The shifting of a paradigm is rarely easy to achieve, but it is doubly troublesome when the concepts are unfamiliar to the people one is seeing on a daily basis in practice. Not only has the medical profession been trained to view health through the lens of chronic disease, but the population at large connects health with pharmaceutical products. They receive this message from most hucksters who want you to buy their products/procedures/cleanses etc. So when it comes to the person taking control of their life, a gargantuan effort is needed to shift many people’s locus of control from the external to the internal. And it can be financially risky to give a person agency over their own health.

Growing Awareness

Fortunately, there is a growing awareness that lifestyle is more than a sidebar to achieving health. Instead it is health. One aspect in particular has gained a wide interest recently, the issue of insulin resistance.

It is this concept that I now spend most of my consultations discussing with amenable patients. The subject can be as complex or as straightforward as one wants to make it. Fundamentally, we do not need carbohydrates. Another large industry – the misnamed ‘food industry’ – would disagree, but physiology says we don’t.

Up to 70% of the Western diet is composed of carbohydrates. Most of the items in our supermarket trolleys are in packets with barcodes and usually contain a lot of carbohydrate, and worse still refined carbohydrates. These products are broken down into the main fuel of the body and in particular the brain, i.e. glucose. However, many of these products contain fructose, or more precisely high fructose corn syrup, a substance that causes a great many problems for our mitochondria and subsequently our cells and energy levels. Most of the health problems that we develop are ‘energy’ problems. Using this term runs the risk of wandering into the land of ‘woo,’ but slowly the concept of energy deficits as a cause of many inflammatory conditions, such as diabetes, cancers and dementia, is gaining traction.

Returning to insulin resistance. This is a phenomenon that occurs when we consume and create more glucose. Then our body habitus changes, i.e. we get more fat than muscle and we move less. We then need more insulin to regulate our glucose levels. And this is where current medical thinking creates the problem that it then goes on to profit from.

We measure glucose not insulin. Glucose stays within the normal range for decades before it rises above some arbitrary threshold to be called Type 2 diabetes mellitus. But insulin has been raised for decades resulting in high blood pressure, altered lipids, migraines, anxiety, depression, IBS, polycystic ovarian syndrome, dementia, cancer and insomnia to list but a few. All of these conditions are seen as separate problems when in fact they have a common treatable root cause.

Let me just clarify something at this stage. I am not saying that these complex conditions are solely caused by insulin resistance (IR), but IR is a fundamental feature and if more effort went into reducing IR through actual lifestyle changes then people could actually return to and maintain a state of good health.

Suicide

At the beginning of this article I alluded to how I died in 2022 and that was the death of this doctor. Since that suicide attempt, an attempt precipitated by increasing dismay at the state of the world and my profession in particular, I have rejected many of the beliefs and gods of the past. I have found hope in taking an approach to both my lifestyle and that of my patients which actually has tangible results, and is not based on probabilistic forecasts. My own state of health is fundamental to how I practice medicine and is reflected in my consultation style and physical presence with my patients, and it influences whether they ‘believe’ what I tell them until they see for themselves that it is or isn’t working. Then we rethink and try again. This is unlike the medical model that expects the patient to believe regardless of the almost inevitable side effects.

The physician needs to be and live in the state of health that they want the patient to obtain. Patients are driven by emotion and to some extent by optics, not by rational argument. An overweight, flatulent and out-of-breath doctor is not going to promote anything healthy in his or her patients. Such a doctor can, however, empathize with the ‘pill for every ill’ model, having clearly embraced it wholeheartedly.

The role of the doctor has declined in significance over time and will continue to do so with the evolution of more advanced AI models if doctors continue down the same road using the same disease model paradigms that are conveniently linked to pharmaceutical products. Instead, doctors need to revert to the model of the physicians of old, and perhaps once again let ‘food be thy medicine’ and be role models for their patients. Optics in today’s age of forever-on-screens is a useful adjunct, but the doctor-patient relationship untainted by influence from the pharmaceutical industry should still be the bedrock of the practice of medicine.

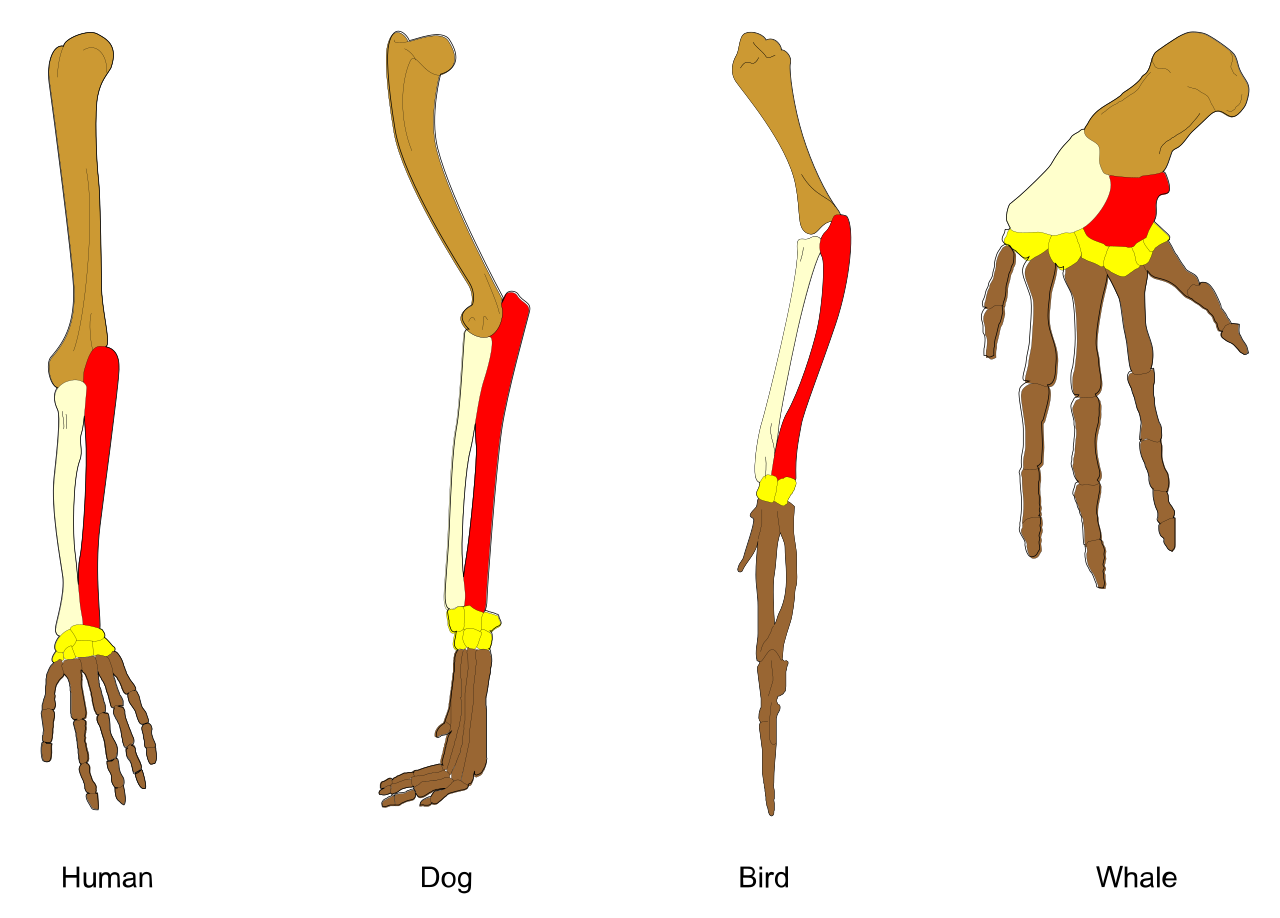
